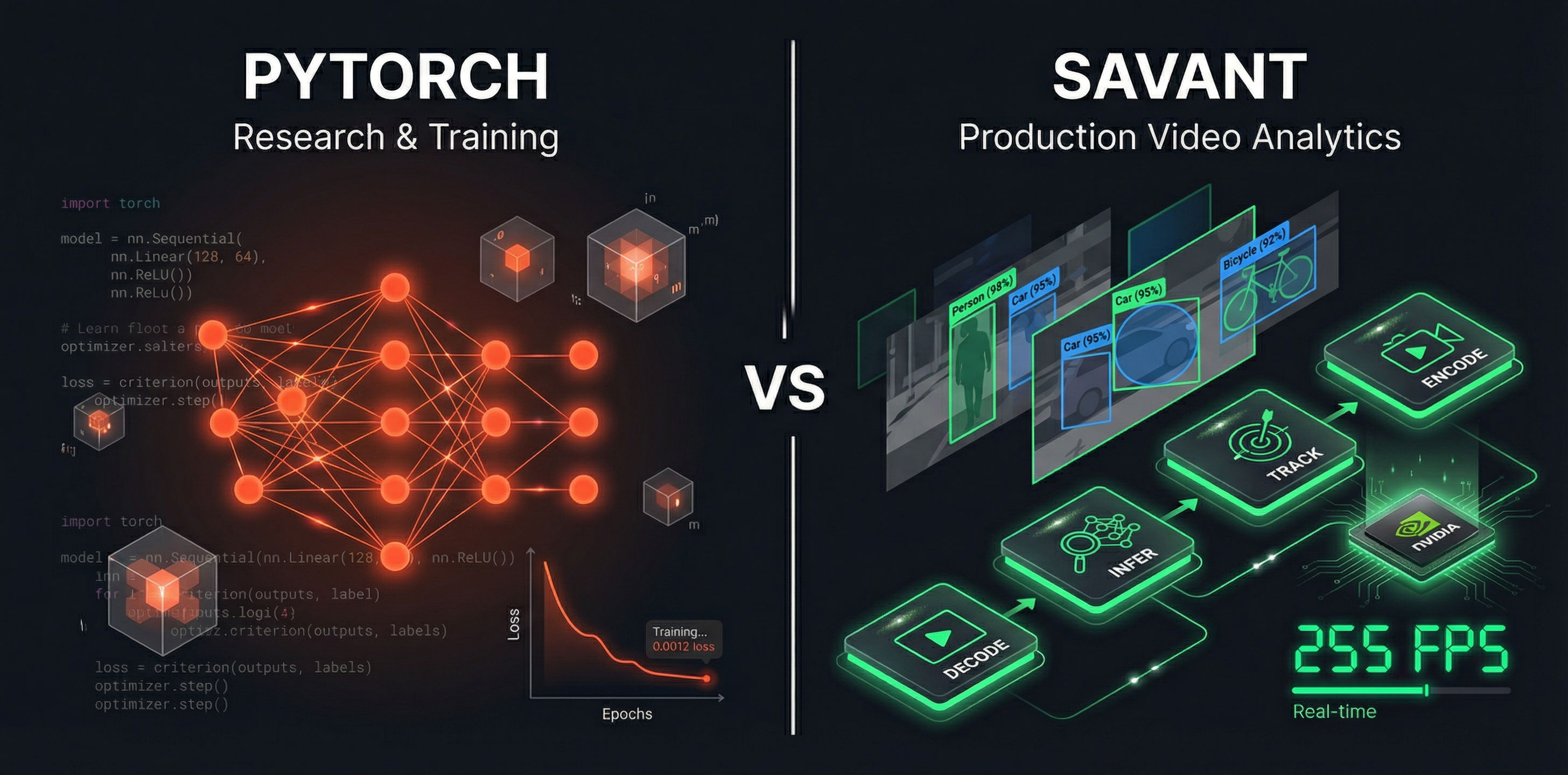

Savant vs. PyTorch: Purpose, Architecture, and When to Use Each

PyTorch engineers know that PyTorch can do inference — and it can. A model trained in PyTorch can be evaluated on a GPU in a handful of lines, results come back quickly, and the whole thing feels familiar and controllable. So when the task shifts from training to deploying a video analytics system, the natural instinct is to reach for the same tool: add a video decoding step, wrap the model call in a loop, ship it.

This is where the problems start. Not because PyTorch is a bad tool — it is an excellent one — but because video analytics production systems impose constraints that PyTorch’s design does not address: sustained multi-stream throughput, hardware-accelerated decoding, GPU memory residency across pipeline stages, multi-model composition, latency control, and operational observability. Using PyTorch for this job is possible, but it means building all of that infrastructure yourself, usually discovering the hard way that naive implementations saturate PCIe bandwidth long before the GPU’s inference capacity is reached.

This article explains what those constraints are, why they exist, how Savant was built to address them specifically, and when each tool — or both together — is the right choice.

Design Philosophy: Research Flexibility vs. Production Serving

PyTorch

PyTorch was created by Meta AI Research and released in 2016 as a replacement for Torch, designed from the ground up to serve the needs of machine learning researchers. Its core design principle is dynamic computation graphs (define-by-run), which makes it easy to write and debug models imperatively, iterate quickly on architectures, and explore novel ideas without restructuring code.

PyTorch excels at:

- Defining, training, and validating neural network architectures

- Rapid prototyping with interleaved Python control flow and tensor operations

- Research experimentation where model topology changes frequently

- Exporting models to deployment-ready formats (TorchScript, ONNX, TensorRT)

This design philosophy makes PyTorch extraordinarily productive for researchers and ML engineers who are iterating toward a model that works. It is a library — it provides primitives and you compose them into whatever architecture you need.

Savant

Savant was built on a fundamentally different premise: a production video analytics deployment is not a research problem. Once the model is trained, a completely different set of challenges emerges — challenges that PyTorch was never designed to address.

Savant is a framework, not a library. This distinction matters. A library provides utilities your code calls; a framework calls your code and defines the structure within which it operates. Savant defines the pipeline topology, memory management model, data flow architecture, transport protocol, and deployment strategy. You provide the domain logic — model configuration, custom processing functions, inference post-processing — and Savant ensures it runs correctly and efficiently in production.

Savant is built on NVIDIA DeepStream, which is itself built on GStreamer. It inherits DeepStream’s hardware-accelerated video processing pipeline while adding a Python-first API, production tooling, and a modular service architecture that makes DeepStream’s capabilities accessible without requiring expertise in GStreamer internals or C++.

Architectural Differences

The architectural differences between PyTorch and Savant pipelines are substantial and directly determine throughput, latency, and hardware utilization.

Data Flow Model

A typical PyTorch video inference implementation follows a sequential, frame-by-frame model: decode a frame, transfer it to GPU memory, run inference, retrieve results, process the next frame. Each stage waits for the previous one to complete. The CPU and GPU alternate between active and idle states, and the hardware — including specialized NVDEC and NVENC units — is used only a fraction of the time.

Savant inherits DeepStream’s parallel pipeline model built on GStreamer’s data flow architecture. Video decoding (NVDEC), inference (TensorRT), and video encoding (NVENC) run as concurrent pipeline stages. As soon as a hardware block finishes processing a frame, it immediately begins on the next. CPU and GPU operate in parallel rather than in sequence. This parallelism is not something that can be easily reproduced in Python with threading — it is an intrinsic property of the GStreamer pipeline model.

Memory Motion Model

This is where the performance difference is most dramatic. In a PyTorch pipeline:

- Compressed video is read from disk or network

- Decoded on the CPU into raw RGB or YUV frames (large memory footprint)

- Converted to a NumPy array or PIL image

- Transferred over PCIe from CPU RAM to GPU VRAM

- Inference runs on GPU

- Results are transferred back to CPU

Steps 2–4 represent substantial overhead. A single second of 1080p video at 30 FPS produces approximately 180 MB of uncompressed frame data. The same content encoded in H.264 occupies roughly 2 MB. Sending 180 MB across PCIe instead of 2 MB saturates the bus long before the GPU’s inference capacity is reached.

In Savant’s pipeline, compressed video is uploaded to GPU memory in its encoded form. NVDEC — a dedicated hardware block that does not share resources with CUDA cores — decodes frames directly into GPU memory. The decoded frames remain in GPU memory for the entire pipeline: preprocessing, inference, postprocessing, and optional re-encoding all happen without leaving the GPU. The CPU processes only lightweight metadata such as bounding boxes, confidence scores, and class labels.

This memory motion model is one of the primary reasons Savant delivers more than 3× the throughput of standard PyTorch pipelines for the same model on equivalent hardware. In a published benchmark running YOLOv8M FP16 on an RTX 2080:

| Implementation | Throughput |

|---|---|

| PyTorch CUDA + OpenCV (SW decode) | 75 FPS |

| PyTorch CUDA + hardware decode | 107 FPS |

| Savant TensorRT + hardware decode | 255 FPS |

The 107 FPS figure for PyTorch with hardware decode demonstrates that switching the decode stage alone yields a meaningful improvement. The further jump to 255 FPS in Savant comes from combining hardware decode with TensorRT-optimized inference and the GStreamer-based parallel pipeline model.

Inference Engine

PyTorch’s native inference engine is flexible and supports many backends — CPU, CUDA, ROCm, and others. This flexibility serves research use cases well. For production deployment on NVIDIA hardware, PyTorch can be paired with TensorRT through torch.compile or TensorRT conversion, but this integration adds complexity and requires additional engineering work.

Savant uses TensorRT natively as its primary inference engine, with no additional integration work required. Models are declared in pipeline configuration files with supported precision (FP32, FP16, INT8) and batch sizes, and Savant manages engine compilation, caching, and execution. For models not compatible with TensorRT, Savant supports a PyTorch inference path, allowing the two systems to complement each other within the same pipeline.

Multi-Model Pipeline Composition

Real-world video analytics systems rarely run a single model. A license plate recognition system runs a vehicle detector, a vehicle tracker, a plate detector, a plate character recognition model, and potentially a make/model classifier — all within the same frame processing cycle.

In PyTorch, composing multi-model pipelines requires explicit orchestration: for each input frame, call model A, filter its outputs, extract ROIs, call model B on those ROIs, aggregate results. This logic is application code, and it must be written, tested, and maintained.

In Savant, multi-model pipelines are declared in a YAML configuration file. Savant manages the execution order, GPU memory allocation, batch construction, and metadata flow between models. The framework handles object-level context automatically: secondary models automatically receive the detected objects from primary models as their input ROIs. The developer writes Python functions for pre- and post-processing logic, not pipeline orchestration.

Use Cases

Where PyTorch Is the Right Tool

Model training. This is PyTorch’s primary domain. No production video analytics framework replaces PyTorch for training detection, segmentation, classification, or tracking models. PyTorch’s dynamic graphs, automatic differentiation, and training ecosystem (Lightning, Hugging Face, etc.) are without peer.

Research and prototyping. When the goal is to explore a new architecture, validate a hypothesis, or evaluate a dataset, PyTorch’s flexibility is essential. There is no deployment overhead, no pipeline configuration to write — just a model and a training loop.

Low-throughput inference on images. For applications that process a moderate volume of individual images rather than continuous video streams — document OCR, medical image analysis, photo moderation — PyTorch with TorchServe or Triton Inference Server is a mature, well-supported choice.

Non-NVIDIA hardware. PyTorch supports CPU inference, AMD ROCm, Apple Metal, and other backends. Savant is NVIDIA-specific.

Academic and research environments. PyTorch is the de facto standard in the research community. Papers ship code in PyTorch; reproducibility requires it.

Where Savant Is the Right Tool

Real-time multi-camera video analytics. Systems that process live RTSP streams from multiple cameras or other live sources simultaneously require hardware-efficient pipelines. PyTorch’s per-frame processing model cannot sustain the throughput required; Savant’s batched, parallel pipeline model is designed exactly for this load.

Production deployment on NVIDIA edge and data center hardware. Savant pipelines run without code changes on Jetson Orin (edge), desktop GPUs (development and testing), and data center accelerators (T4, A10, H100). This hardware convergence removes a significant source of deployment complexity.

Multi-model inference pipelines. Person detection, ReID tracking, attribute classification, pose estimation — declaratively composed in a YAML pipeline, with metadata flowing automatically between stages. The developer writes Python post-processing functions; Savant handles the rest.

Glass-to-glass video applications. When the output is a re-encoded video stream with overlaid bounding boxes, labels, and metadata — as in surveillance, traffic monitoring, industrial QA, or broadcast — Savant provides hardware-accelerated NVENC encoding and a built-in drawing API (OpenCV CUDA, savant-rs). Frame-level visualization requires zero PCIe data movement.

Distributed and microservice architectures. Savant’s ZeroMQ-based streaming protocol and adapter model allow video pipelines to integrate natively with Kafka, Redis, RTSP, S3, and custom sources/sinks. Fan-out/fan-in topologies — where a single stream is distributed to multiple parallel inference modules and results are merged — are supported through the Router and Meta-merge services.

Systems requiring dynamic reconfiguration. Savant supports runtime parameter updates via etcd without pipeline restarts, enabling feedback-loop-driven systems where inference results change pipeline behavior — such as adjusting confidence thresholds based on a lighting estimation model.

Monitored production deployments. Savant provides native OpenTelemetry instrumentation with frame-level trace context propagated across services. Observability requires no additional integration work.

Target Audiences

PyTorch

- ML researchers building and publishing novel model architectures

- Data scientists exploring datasets, running experiments, and validating model quality

- ML engineers focused on training pipelines, data augmentation, and model evaluation

- Backend engineers serving prediction APIs over HTTP (images, documents, text)

- Any team working on non-NVIDIA hardware or requiring hardware portability

Savant

- Computer vision engineers building production systems that process live video at scale

- ML engineers transitioning from research to production who need to take a trained model and deploy it in a real-time pipeline without rebuilding the inference stack from scratch

- Systems engineers integrating vision systems into larger enterprise infrastructure (Kafka, Kubernetes, monitoring, alerting)

- Edge AI teams targeting Jetson Orin or similar NVIDIA edge devices for on-premise or in-vehicle deployment

- Teams building surveillance, smart city, industrial monitoring, or retail analytics products where hardware utilization efficiency and multi-stream throughput are commercial requirements

Using PyTorch and Savant Together

These tools are not mutually exclusive. The typical workflow is:

- Train and validate the model in PyTorch, using standard training infrastructure.

- Export the model to ONNX or TensorRT format.

- Deploy within a Savant pipeline, where TensorRT handles inference and Savant manages the video pipeline, multi-model composition, metadata, and deployment.

For models that are not TensorRT-compatible — due to unsupported operators or dynamic control flow — Savant supports a native PyTorch inference path. This allows PyTorch and TensorRT models to coexist within the same pipeline, with Savant managing the shared frame buffer, metadata context, and execution order.

The net result is that PyTorch continues to do what it does best — model development — while Savant handles what PyTorch was never designed for: operating at production throughput over continuous live video streams.

Summary

| Dimension | PyTorch | Savant |

|---|---|---|

| Primary purpose | Model training and research | Production video analytics serving |

| Design paradigm | Library (you define the structure) | Framework (structure is defined for you) |

| Inference engine | PyTorch native, optional TensorRT | TensorRT native, optional PyTorch |

| Video decoding | CPU (SW) by default, optional HW | Hardware (NVDEC) by default |

| Memory model | CPU decode → PCIe transfer → GPU | Encoded upload → NVDEC → stays in GPU |

| Data flow | Sequential, frame-by-frame | Parallel, GStreamer-based pipeline |

| Multi-model support | Manual orchestration | Declarative YAML composition |

| Hardware scope | CPU, CUDA, ROCm, Metal, others | NVIDIA GPU and Jetson only |

| Deployment model | Custom or Triton/TorchServe | Containerized, ZeroMQ protocol, Kubernetes-ready |

| Observability | External integration required | Native OpenTelemetry |

| Target user | ML researcher, data scientist | CV/ML production engineer |

The confusion between PyTorch and Savant arises because they share surface-level characteristics: Python, NVIDIA GPUs, neural network inference. But the problems they were built to solve are at opposite ends of the ML lifecycle. PyTorch is where models are created; Savant is where they operate in the world. Neither tool makes the other obsolete — and for teams building serious computer vision products, both are typically present.

Interested in benchmarks? See Savant is More Than Three Times Faster Than PyTorch in Video Inference. For a broader comparison of the computer vision technology landscape, see Choosing the Technology for a Computer Vision Product in 2025.