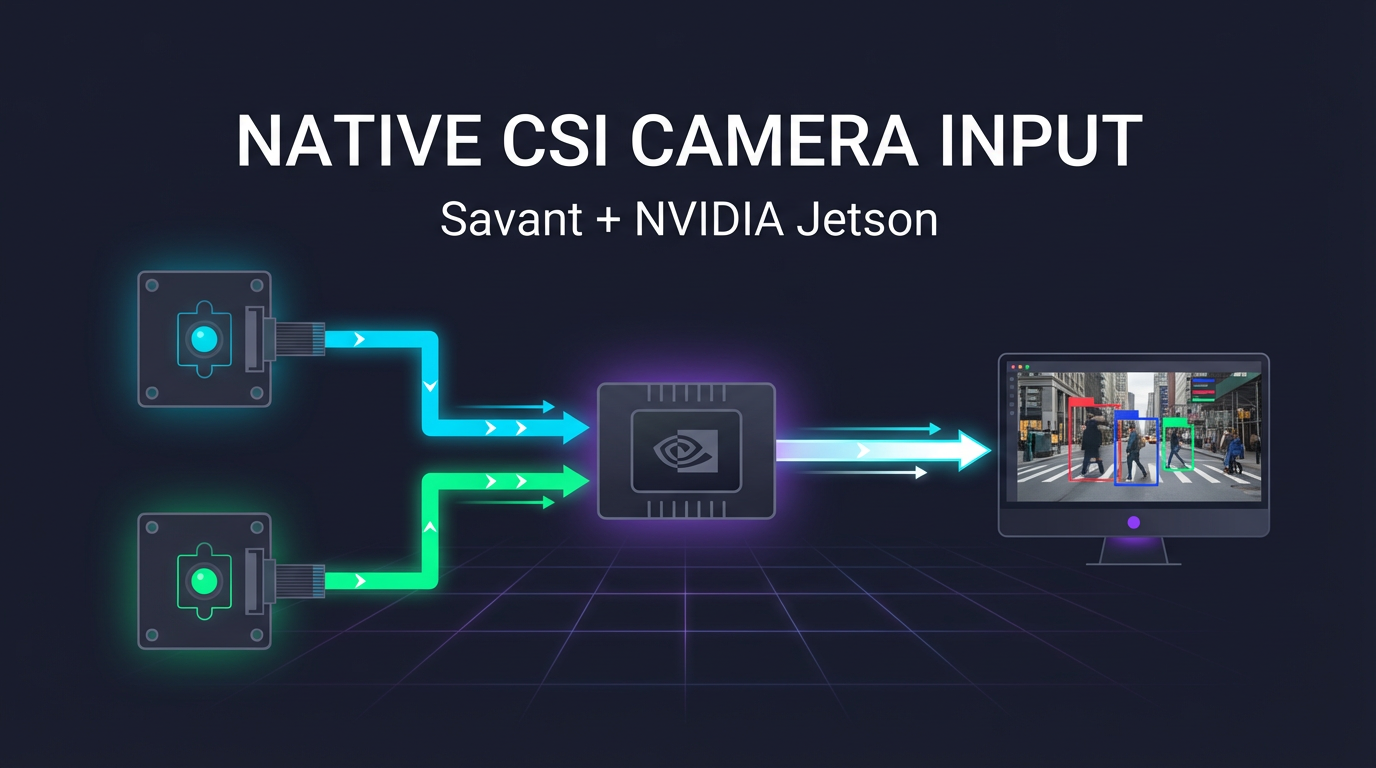

Native CSI Camera Input for Savant on NVIDIA Jetson

Embedded vision and robotics applications on NVIDIA Jetson have a simple requirement: get pixels from the camera sensor to GPU inference as fast as possible. Every millisecond of latency matters when a robot arm is tracking an object, a drone is avoiding obstacles, or an AGV is navigating a warehouse floor.

Starting today, Savant supports native CSI camera input through a new nvarguscamerasrc_bin pipeline source element. You declare your cameras in the module YAML, and Savant handles the rest — no adapters, no sidecar containers, no glue scripts.

The Shortest Path from Sensor to Inference

With MIPI CSI-2 cameras on Jetson, the image sensor delivers raw pixel data over a dedicated hardware bus. The Jetson’s ISP (Image Signal Processor) debayers, denoises, and tone-maps the raw stream, producing frames directly in NVMM (NVIDIA Multimedia Memory) — GPU-accessible memory that DeepStream and TensorRT can consume without a single extra copy.

Compare this to what happens with a network camera:

| Step | RTSP Camera | CSI Camera (native) |
|---|---|---|
| Capture | Sensor → camera SoC | Sensor → Jetson ISP |
| Encoding | H.264/HEVC on camera | None needed |
| Transport | RTP/UDP over Ethernet | MIPI bus (hardware) |
| Decoding | NVDEC on Jetson | None needed |
| Memory | Copy to NVMM | Already in NVMM |

With an RTSP camera, frames are encoded on the camera, serialized over the network, decoded on the Jetson, and only then placed into GPU memory. With a CSI camera and the new nvarguscamerasrc_bin, uncompressed frames arrive in NVMM straight from the ISP: no encoding, no network stack, no decoding. This is the most efficient data path available on Jetson hardware.

For robotics and embedded applications where latency budgets are measured in single-digit milliseconds, this difference is not academic — it determines whether the system can react in real time.

Why CSI Cameras Fit Embedded and Robotics

We previously covered the advantages of CSI cameras over RTSP in detail. The short version: CSI cameras are purpose-built for the constraints of embedded systems.

- Deterministic latency. No network jitter, no encoder buffering, no decode queue. Frame delivery timing is governed by hardware, not software stacks.

- Power efficiency. No Ethernet PHY, no camera SoC running a full Linux stack. CSI cameras draw a fraction of the power of an IP camera — critical for battery-powered robots and drones.

- Mechanical simplicity. A flat flex cable replaces Ethernet cabling and PoE injectors. Cameras can be mounted in tight enclosures where an RJ-45 connector would not fit.

- Custom optics. Industrial CSI camera modules accept interchangeable lenses, IR filters, and global-shutter sensors — letting you tailor the optical system to the task rather than accepting whatever a CCTV camera ships with.

- Multi-camera synchronization. Stereo vision and multi-view stitching require frame-level sync that RTSP simply cannot guarantee. CSI hardware triggers can synchronize multiple sensors to the same exposure window.

Until now, using CSI cameras with Savant required an external adapter process to capture frames and push them into the pipeline over ZeroMQ. That extra hop reintroduced encoding and copying overhead, negating much of the CSI advantage. The new native input removes that bottleneck entirely.

Configuration

Declaring CSI cameras in your module YAML is straightforward:

    name: my-csi-pipeline

    parameters:
      batch_size: 2
      output_frame:
        codec: jpeg  # works on all Jetson models including Nano
        #codec: hevc  # use if you are on NX/AGX (NVENC required)
        jpeg_encoder_params:
          quality: 95
        hevc_encoder_params:
          profile: Main
          bitrate: 8000000

    pipeline:
      source:
        # use: v4l2-ctl -d /dev/video0 --list-formats-ext
        # to determine camera formats and max fps
        element: nvarguscamerasrc_bin
        properties:
          sources:
            - source-id: camera-0
              framerate: 25/1
              properties:
                sensor-id: 0
                sensor-mode: 1
            - source-id: camera-1
              framerate: 10/1
              properties:
                sensor-id: 1
                sensor-mode: 1

Each entry in the sources list maps to a physical CSI sensor. The source-id identifies the stream throughout the pipeline and in downstream sinks. The framerate sets the capture rate as a GStreamer fraction. The properties dict passes through directly to the underlying nvarguscamerasrc element — any property supported by the NVIDIA plugin (exposure, white balance, sensor mode, etc.) can be set per camera.

The output codec choice depends on your Jetson model and downstream requirements. JPEG is available on all Jetson platforms, including Orin Nano, which has no hardware video encoder (NVENC). HEVC requires NVENC, so enable it only on NX and AGX modules.
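Before committing per-camera settings such as sensor-id, sensor-mode, and framerate to the module YAML, it can help to confirm on the host that the sensor accepts them with plain GStreamer. The sketch below assumes gst-launch-1.0 and the nvarguscamerasrc plugin are installed, as on a stock JetPack host. argus_probe_cmd is a hypothetical helper, not part of Savant, and it only prints the command rather than running it.

```shell
# Print a gst-launch-1.0 command that pulls 100 frames from one CSI sensor
# into NVMM memory and discards them. The helper name and the 100-frame
# limit are illustrative choices, not Savant defaults.
argus_probe_cmd() {
  local sensor_id="$1" sensor_mode="$2" framerate="$3"
  printf "gst-launch-1.0 nvarguscamerasrc sensor-id=%s sensor-mode=%s num-buffers=100 ! 'video/x-raw(memory:NVMM),framerate=%s' ! fakesink\n" \
    "$sensor_id" "$sensor_mode" "$framerate"
}

# Mirror the two cameras declared in the module YAML.
argus_probe_cmd 0 1 25/1
argus_probe_cmd 1 1 10/1
```

To run a printed command on the Jetson, pass it to the shell, for example `eval "$(argus_probe_cmd 0 1 25/1)"`. If caps negotiation fails, the requested framerate is likely not offered by that sensor mode.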

Running the Demo

A complete working sample is included in the Savant repository. It captures from two CSI cameras and streams the output to an Always-On RTSP sink:

    git clone https://github.com/insight-platform/Savant.git
    cd Savant
    git lfs pull

    # Ensure the Argus daemon is running
    sudo systemctl start nvargus-daemon

    # Launch the demo
    docker compose -f samples/nvarguscamerasrc/docker-compose.l4t.yml up

Once running, open the streams in any RTSP-capable player:

    rtsp://127.0.0.1:554/stream/argus-0
    rtsp://127.0.0.1:554/stream/argus-1

Or view them via LL-HLS in a browser:

    http://127.0.0.1:888/stream/argus-0/
    http://127.0.0.1:888/stream/argus-1/

The demo runs on NVIDIA Jetson (L4T) with one or more CSI cameras connected.
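For a quick check without a GUI player, the stream endpoints can also be listed and probed from a shell. This is a minimal sketch: stream_urls and probe_rtsp are hypothetical helpers, the ports and paths are taken from the demo URLs in this section, and probe_rtsp assumes ffprobe (from FFmpeg) is installed on the machine doing the check.

```shell
# Print the RTSP and LL-HLS URLs for one stream name.
stream_urls() {
  local host="$1" name="$2"
  printf 'rtsp://%s:554/stream/%s\n' "$host" "$name"
  printf 'http://%s:888/stream/%s/\n' "$host" "$name"
}

# Ask ffprobe for the codec and resolution of an RTSP stream over TCP.
# Returns non-zero if ffprobe is missing or the stream is unreachable.
probe_rtsp() {
  command -v ffprobe >/dev/null 2>&1 || { echo "ffprobe not found" >&2; return 127; }
  ffprobe -v error -rtsp_transport tcp \
    -show_entries stream=codec_name,width,height -of csv=p=0 "$1"
}

# List the endpoints for both demo streams.
for name in argus-0 argus-1; do
  stream_urls 127.0.0.1 "$name"
done
```

Once the demo is up, `probe_rtsp rtsp://127.0.0.1:554/stream/argus-0` should print one line describing the video stream. An error here usually means the pipeline or the sink has not finished starting.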

Docker Fix for Hardware Encoding on Jetson

This release also includes a fix for a known issue where hardware H.264/HEVC encoding fails inside Docker on Jetson with JetPack 6.x. The root cause, which we investigated in depth previously, is that NVIDIA’s encoder libraries require lsmod to detect iGPU capabilities.

The Savant DeepStream Docker image now sets AARCH64_IGPU=1, bypassing the lsmod check entirely. This ensures hardware encoding works reliably inside containers on Jetson Orin platforms that have an NVENC engine.
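If you build a custom image on top of Savant, or override the container environment, it may be worth confirming that the variable is still set. The sketch below is meant to be run inside the container. check_igpu_env is a hypothetical helper, and the lsmod line is informational only.

```shell
# Report whether the encoder workaround is visible in the current environment.
check_igpu_env() {
  if [ "${AARCH64_IGPU:-}" = "1" ]; then
    echo "AARCH64_IGPU=1: lsmod check bypassed"
  else
    echo "AARCH64_IGPU not set to 1"
  fi
  # Informational: show whether lsmod exists in this image at all.
  if command -v lsmod >/dev/null 2>&1; then
    echo "lsmod: present"
  else
    echo "lsmod: absent"
  fi
}

check_igpu_env
```

If the first line reports that the variable is not set, adding AARCH64_IGPU=1 to the service environment in your compose file restores the behavior described above.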

Requirements

- Platform: NVIDIA Jetson (L4T / aarch64) only.

- Daemon: The nvargus-daemon system service must be running on the host.

- Docker: The container needs access to /tmp/argus_socket (bind-mounted in the sample docker-compose) and the nvidia runtime.

- Cameras: Physical MIPI CSI-2 cameras connected to the Jetson’s CSI ports. Use v4l2-ctl -d /dev/video0 --list-formats-ext to verify supported formats and framerates before configuring the pipeline.
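The host-side requirements can be checked in one pass before starting the containers. This is a rough sketch, not an official Savant tool: report and preflight are hypothetical helpers, and the checks assume a systemd-based L4T host.

```shell
# report LABEL CMD...: run CMD quietly and print "ok" or "MISSING" next to LABEL.
report() {
  local label="$1"
  shift
  if "$@" >/dev/null 2>&1; then
    printf '%-8s %s\n' "ok" "$label"
  else
    printf '%-8s %s\n' "MISSING" "$label"
  fi
}

# Check each host-side requirement listed in this section.
preflight() {
  report "aarch64 platform"         test "$(uname -m)" = "aarch64"
  report "nvargus-daemon is active" systemctl is-active --quiet nvargus-daemon
  report "/tmp/argus_socket exists" test -e /tmp/argus_socket
  report "v4l2-ctl is installed"    command -v v4l2-ctl
}

preflight
```

Any line marked MISSING points at the requirement to fix first. The nvidia container runtime is not covered here, since how to verify it depends on the Docker setup.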

What’s Next

Native CSI camera input opens the door for tighter Jetson-specific integrations. If you are building robotics or embedded vision applications on Jetson with CSI cameras, we would love to hear about your use case. Join us on Discord or open an issue on GitHub.